The Claude Code Controversy

The recent controversy surrounding Claude Code reveals hidden traps in AI product optimization. Three ‘well-intentioned’ optimizations by Anthropic (lowering reasoning intensity, a buggy cache-clearing change, and an overly restrictive prompt) led to a 45-day performance disaster. This article dissects the technical details and product logic, and shows how thin the line is between ‘fine-tuning’ and ‘collapse’ in the era of large models.

Imagine you are a surgeon, and halfway through a surgery, you realize that your scalpel has become dull—not all at once, but gradually, until one day you can’t cut through skin anymore.

You ask the supplier, and they say, “Oh, we thought the blade was too sharp and might injure the doctors, so we secretly dulled it a bit. Then we thought the handle was too heavy, so we switched to a lighter one. Finally, we found the blade was too long and hard to store, so we cut it down by two centimeters. Every step was for your benefit.”

This is what Anthropic did to Claude Code over the past 45 days.

“Claude Became Dumber”—This Time It’s Not an Illusion

Recently, the phrase “Claude became dumber” has spread across developer communities.

Posts on Hacker News, complaints on Reddit, and grievances on X have been rampant. Initially, users thought it was their issue—was it the prompts they wrote? Was their workflow too complicated? Some even began to doubt their programming skills.

As a user of Claude Code who writes code daily, I experienced this self-doubt too. Since mid-March, I noticed a significant decline in Claude Code’s performance: tasks that previously required one round of dialogue now took three or four; code that was once clean and concise now included unnecessary comments; and sometimes, Claude completely forgot the context we had just discussed, like an intern with amnesia.

I thought my usage was the problem and spent a weekend re-learning Anthropic’s prompt engineering guidelines.

Then on April 23, Anthropic’s Claude Code development team finally broke their silence with a post titled “An update on recent Claude Code quality reports.”

In plain language, it meant: User feedback about ‘dumbing down’ is not an illusion; we messed up.

Specifically, three seemingly ‘user-friendly’ product optimizations triggered a chain reaction, leaving one of the world’s strongest programming models with degraded performance for 45 days. Each of the three independent changes weakened Claude’s capabilities along a different dimension, and together the effect was catastrophic.

Next, I will break down these three optimizations, explaining what each was, why they caused issues, and what this means for those of us developing AI products.

First Cut: Sacrificing “Thinking Time” for Speed—Users Want Fast, Not Foolish

Timeline: Launched on March 4

Let’s start with the first change, which was also the earliest.

A characteristic of large models is that the longer they think, the better their answers. This is not mystical; it’s a fundamental principle of reasoning models. The more “thinking budget” you give the model (allowing it to perform more rounds of internal reasoning), the higher quality results it can produce. It’s like taking an exam with three hours versus thirty minutes; the quality of answers will differ significantly.

Claude Code has a parameter called “reasoning intensity,” which simply controls how long the model can think. This knob has several settings: low, medium, high, and very high. Previously, the default was “high.”

Then came the complaints. Many users reported that the Opus model (the strongest version of Claude) took too long to think, sometimes causing the UI to freeze. This feedback was valid—I experienced it myself, waiting while the model thought, watching the screen spin, which was indeed frustrating.

The team’s response was to quietly adjust the default reasoning intensity from “high” to “medium.”

Note the word “quietly.” They did not specifically mention this change in the update log or notify users with a pop-up. In internal evaluations, the performance at “medium” seemed acceptable—speed improved, and the loss of intelligence appeared minimal.

But in actual use, it was a different story.

A personal insight: “slightly worse” means something entirely different in large models than in traditional software.

In traditional software, if a button’s response time goes from 100 milliseconds to 150 milliseconds, users might not even notice. In large models, a drop from “high” to “medium” may cost only a few percentage points on benchmarks, yet in real development it can separate “producing usable code” from “generating a mess that takes you 20 minutes to fix manually.”

A loose analogy: if a chess player’s rating drops from 2800 to 2750, it still seems “super impressive” to the average person, but to other top players, the difference is glaring. Claude Code users are precisely those “top players”: professional developers who are extremely sensitive to the quality of model outputs.

After the launch, negative feedback from users began to pour in. The team took some remedial measures, such as prompting users at startup to manually adjust the reasoning intensity, adding an inline intensity selector, and even restoring an option called “ultrathink” for very high intensity.

But the problem is—most users will not change the default settings.

This is a basic principle of product design; those of us in mobile internet understand it: default values are decisions made by product managers on behalf of users, and over 80% of users will accept the default. Changing the default from “high” to “medium” effectively means making a decision to “sacrifice intelligence for speed” for 80% of users who have no idea what happened.

It wasn’t until April 7 that the team changed the default back to “high”; Opus 4.7, released later, ships with “very high” mode enabled by default.

This cut lasted 34 days.

Second Cut: Cost-saving Cache Clearing Became a “Memory Black Hole”—The Most Subtle, Most Damaging Cut

If the first cut made Claude a bit dumber, the second cut caused Claude to completely forget.

The technical details of this bug are somewhat complex, but I will try to explain it simply.

When you use Claude Code to write code, each round of dialogue not only produces results but also involves a lot of “internal reasoning” in the background—for example, “the user asked me to refactor this function, I previously saw that this function called module A, which has a known compatibility issue, so I need to handle that edge case during refactoring.”

These internal reasoning processes (also called reasoning chains) are retained in the dialogue history. This is crucial for maintaining contextual coherence in subsequent dialogues.

On March 26, the team launched an optimization: automatically clear old internal reasoning content after an hour of inactivity to save token costs and speed up response times.

The design intention sounds reasonable. If you leave for lunch and come back, the accumulated internal reasoning will indeed occupy the context window, so clearing some could make the model run faster and save money.

However, a fatal bug was introduced.

It was supposed to be “clear old reasoning content once after the session has been idle for over an hour.” Instead, it became “once the session has been idle for over an hour, clear old reasoning content on every subsequent turn.”

Feel the difference:

- Correct behavior: After being away for an hour, the system clears old records once and then works normally.

- Actual behavior: After being away for an hour, the system clears previous memories after every single statement you make.

What does this mean? It means that once this bug is triggered, Claude Code can only remember the content of the most recent dialogue. It completely forgets why it modified the code, what files it saw before, and what decisions it made.
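The difference between the two behaviors can be sketched with a toy session object. This is a minimal illustration, not Anthropic's code: the `Session` class, the sticky `stale` flag, and the method names are all hypothetical, chosen only to show how "clear once" can turn into "clear on every turn."

```python
from dataclasses import dataclass, field

IDLE_LIMIT_S = 3600  # one hour of inactivity, as described in the article


@dataclass
class Session:
    """Toy conversation state; every name here is hypothetical."""
    reasoning: list[str] = field(default_factory=list)
    last_active: float = 0.0
    stale: bool = False  # sticky flag used only by the buggy variant

    def turn_buggy(self, now: float, thought: str) -> None:
        # Bug pattern: the "was idle" flag is set once and never reset,
        # so every later turn wipes the reasoning history again.
        if now - self.last_active > IDLE_LIMIT_S:
            self.stale = True
        if self.stale:
            self.reasoning.clear()
        self.reasoning.append(thought)
        self.last_active = now

    def turn_fixed(self, now: float, thought: str) -> None:
        # Intended behavior: clear once, on the first turn after the gap.
        if now - self.last_active > IDLE_LIMIT_S:
            self.reasoning.clear()
        self.reasoning.append(thought)
        self.last_active = now


def run(method_name: str) -> list[str]:
    s = Session(reasoning=["old-1", "old-2"], last_active=0.0)
    turn = getattr(s, method_name)
    # The first turn arrives after a two-hour break, then two quick follow-ups.
    for now, thought in [(7200.0, "t1"), (7260.0, "t2"), (7320.0, "t3")]:
        turn(now, thought)
    return s.reasoning


buggy_history = run("turn_buggy")  # only the latest thought survives
fixed_history = run("turn_fixed")  # everything since the break survives
```

In the buggy variant the history never grows past one entry after the break, which matches the "can only remember the most recent dialogue" symptom described above.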

Users noticed that Claude suddenly began repeating the same phrases, giving contradictory advice, and repeatedly asking questions that had already been answered. It was like a colleague who forgets every five minutes, forcing you to explain the project background from scratch each time.

Even worse, this bug had a “hidden damage”: due to the constant cache clearing, a large number of cache misses occurred. Normally, similar dialogue contexts could reuse previous caches, saving time and money. But now, every round was “brand new,” meaning each statement had to be recalculated from scratch.

The result was that users’ usage limits were consumed rapidly: even though they weren’t doing anything unusual, their quota drained away.
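To see why stripping old reasoning causes cache misses, consider a simplified model of prefix caching, in which a request can reuse cached work only up to the first block that differs from the previous request. The block labels and the `cached_prefix_len` helper below are illustrative assumptions, not the actual request format.

```python
def cached_prefix_len(previous: list[str], current: list[str]) -> int:
    """Count the leading blocks shared by two consecutive requests."""
    n = 0
    for a, b in zip(previous, current):
        if a != b:
            break
        n += 1
    return n


# The previous request: system prompt followed by the conversation so far.
prev_request = ["system", "user-1", "thinking-1", "reply-1", "user-2"]

# Normal next turn: history is only appended to, so the old prefix is intact.
next_ok = prev_request + ["thinking-2", "reply-2", "user-3"]

# Buggy next turn: older thinking blocks have been stripped from history,
# so the request diverges from the cached prefix early on.
next_buggy = [b for b in next_ok if not b.startswith("thinking")]

hits_ok = cached_prefix_len(prev_request, next_ok)        # whole old prefix reused
hits_buggy = cached_prefix_len(prev_request, next_buggy)  # reuse stops at the gap
```

Under this model, everything after the first removed block has to be recomputed on each turn, which is one plausible reading of why quotas drained faster than usual.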

Why did this bug take so long to discover? Anthropic provided an explanation in the report that was both amusing and frustrating—

At the time, there were two unrelated experiments running simultaneously. One was a server-side message queue experiment, and the other was a change in the way reasoning chains were displayed. The existence of these two experiments masked the symptoms of this cache-clearing bug. It was like a patient taking three medications at once, where the side effects of two masked the allergic reaction of the third until the allergy became severe enough that it couldn’t be hidden anymore, prompting the doctor to discover the problem.

Ultimately, the team took over a week to pinpoint the root cause and fixed it on April 10.

An interesting detail during the investigation was that the team used the latest Opus 4.7 model to review the problematic code, and Opus 4.7 successfully identified the bug. The previous Opus 4.6 could not. In a sense, Anthropic “used the new Claude to fix the mess created by the old Claude.”

This cut lasted 15 days.

Third Cut: Trying to Reduce Verbosity Made the Model “Dull”: A Single Prompt Cut 3% of Intelligence

The third issue lay with the system prompts.

The Opus 4.7 version produced more output than its predecessor—while performing better on difficult problems, the output was noticeably more verbose. Those who have worked on large model products know that verbosity is a common issue, and user tolerance for it is very low.

To address this problem, the team added a constraint to the system prompt:

“Text between tool calls should be kept within 25 words. Final responses should be limited to 100 words unless the task genuinely requires more detail.”

This sentence was internally tested for several weeks, and no performance decline was observed on Anthropic’s own evaluation set, so it was launched with Opus 4.7 on April 16.

However, the team later conducted larger-scale ablation testing (removing lines from the system prompt one at a time and measuring the impact of each removal on model performance) and found that this constraint caused a drop of approximately 3% across all model versions.

3% might not sound like much, right?

But when combined with the existing two issues—the downgrade in reasoning intensity leading to intelligence loss and the cache-clearing bug causing context loss—this 3% became the last straw that broke the camel’s back. Users did not perceive it as “3% + a few percentage points” in arithmetic addition, but rather as a systemic, comprehensive feeling that “this thing is not working anymore.”

On April 20, the team urgently revoked this prompt.

This cut lasted 4 days.

Notably, these 4 days coincided with the window when Opus 4.7 was just released, and global developers flocked to try it out. The first impression for new users was, “How is this highly anticipated strongest model performing so poorly?”

What Happened in 45 Days: The Disaster Timeline of Three Cuts

Looking at the three issues together, the timeline is as follows:

- From March 4 to April 7 (34 days), reasoning intensity was stealthily downgraded, and Claude became comprehensively dumber.

- From March 26 to April 10 (15 days), the cache-clearing bug caused Claude to forget while rapidly consuming user quotas.

- From April 16 to April 20 (4 days), the overly constraining prompt further compressed the model’s expression and reasoning space.

From March 4 to April 20, the three cuts followed one another closely: for 12 days (March 26 to April 7) the first two were active simultaneously, and the third added 4 more days (April 16 to April 20) just as Opus 4.7 launched.
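The day counts above can be checked with a few lines of date arithmetic. The article gives only months and days, so the year below is an assumption; it does not affect the result because none of the ranges cross February.

```python
from datetime import date

YEAR = 2026  # assumption: the article states only month and day

cuts = {
    "reasoning default": (date(YEAR, 3, 4), date(YEAR, 4, 7)),
    "cache clearing bug": (date(YEAR, 3, 26), date(YEAR, 4, 10)),
    "length constraint": (date(YEAR, 4, 16), date(YEAR, 4, 20)),
}

# Duration of each cut in days, counted from launch date to fix date.
durations = {name: (end - start).days for name, (start, end) in cuts.items()}


def overlap_days(a: tuple[date, date], b: tuple[date, date]) -> int:
    """Days during which two date ranges were both active (0 if disjoint)."""
    start = max(a[0], b[0])
    end = min(a[1], b[1])
    return max((end - start).days, 0)


first_two = overlap_days(cuts["reasoning default"], cuts["cache clearing bug"])
```

Here `durations` holds the 34, 15, and 4 days quoted in the list, and `first_two` is the 12-day window when the first two cuts were active together.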

Throughout this process, none of the changes were “malicious.” Each optimization had a reasonable starting point: speeding up, saving costs, reducing verbosity.

But the ultimate result was that users experienced a continuous and irreversible intelligence degradation for 45 days.

This reminds me of an old joke: a person goes to a barber and says, “Just give me a trim.” The barber first trims one side, thinks it’s asymmetrical; then trims the other side, still thinks it’s asymmetrical; keeps trimming the left side… until the person ends up bald.

Every step was a “fine-tuning,” every step made sense, but the cumulative effect was devastating.

Users Are Not Buying It: The Hurt of Late Truth

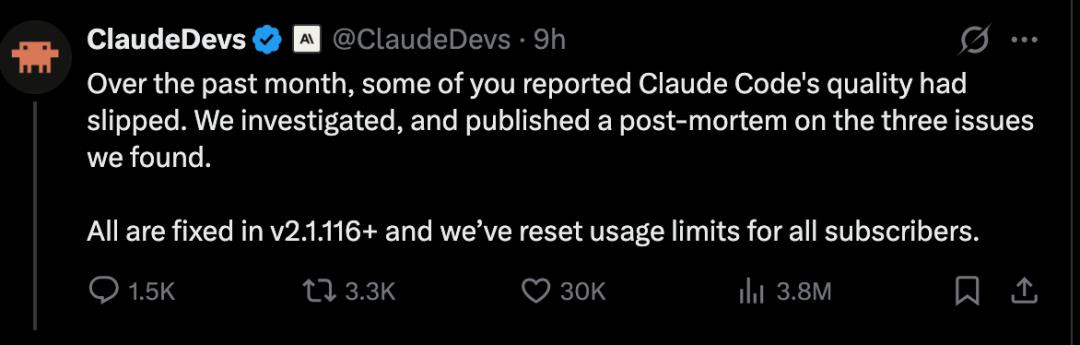

On April 23, Anthropic released this postmortem report and announced the reset of usage limits for all subscription users as compensation.

In theory, admitting problems, publicly sharing technical details, and providing compensation is a relatively sincere approach in the industry. However, the developer community reacted even more harshly.

Why? Because there are three points that are hard to swallow:

First, the “reset limit” compensation is too perfunctory.

Some users posted screenshots on X showing that they paid hundreds of dollars for premium subscriptions each month, and due to the cache bug, their limits were consumed rapidly, while Anthropic’s compensation was simply resetting the limits. Ironically, some found that the reset landed just before their limit was due to refresh anyway, which is like being given one extra day when your monthly pass is about to expire.

Someone calculated that they had paid about $2400 in subscription fees to Anthropic over the past year, only to experience a collapse in service due to the company’s own bug, and the compensation was a trivial limit reset. This kind of “compensation” is hard to see as sincere.

Second, the timing of the release is too “convenient.”

The day the postmortem report was released happened to be the same day OpenAI launched GPT-5.5. In the AI circle, such “coincidental” timing is bound to raise suspicions. Some directly questioned whether Anthropic was trying to release bad news while everyone was focused on GPT-5.5.

Of course, it might just be a coincidence. But when trust is already shaky, any “coincidence” will be interpreted as a “calculation.”

Third, the stance taken before the acknowledgment was disheartening.

During the 45 days before formally acknowledging the issue, the community continually reported that “Claude became dumber.” Anthropic’s official stance was always that “the model has not degraded.”

Imagine this feeling: you paid a high price for a tool, and while using it, you find it’s not working well, so you reach out to the vendor, who says, “You’re mistaken; we have no issues.” After doubting yourself for a month and a half, the vendor finally tells you, “Oh, it’s indeed our problem.”

One user on X expressed it well: “You made me doubt myself for two weeks; I thought my prompts were poor, my workflow was flawed, and even began to question my abilities. In the end, the problem was on your side? And you think a limit reset will appease me?”

The most heartbreaking part is that some users have begun to vote with their feet. Some reported switching to OpenAI’s Codex and having a great experience, considering a complete change of their toolchain. It’s worth noting that getting a heavy user to abandon a deeply integrated tool is extremely difficult; once they leave, the cost of bringing them back is 5 to 10 times that of initial acquisition.

Why Did No One Discover This Internally?—A Reflection for Everyone in AI Product Development

What shocked me most was not the bug itself—what software doesn’t have bugs? What shocked me was that these bugs went undetected internally.

Anthropic provided some explanations in the report:

- The cache bug was difficult to reproduce due to interference from two internal experiments.

- The downgrade in reasoning intensity seemed to have minimal impact on internal evaluation sets.

- The prompt constraint did not trigger performance declines on their own evaluation sets.

But peeling back the layers, the root cause is simple: Internal developers were not using the public release version.

Anthropic’s internal staff used versions with various experimental features, not the public version installed by ordinary users. This means the product they experienced was never the same as the one users experienced.

The classic remedy has a name in the software industry: “dogfooding,” meaning your team should use your own product to truly understand user pain points.

Anthropic also acknowledged this issue in the report, stating they would promote more internal employees to use the public release version. But honestly, such commitments have been heard too often in the industry.

As someone who has worked in AI products for several years, I want to share a personal experience: our team previously developed a document processing tool based on large models, and the internal demo worked exceptionally well; everyone thought there were no issues. However, on the first day of launch, users criticized it harshly, because the documents we had tested were well-formatted PDFs, while real users were throwing in crooked phone screenshots, scanned documents, and even PPT screenshots pasted into a Word document.

The gap between evaluation sets and the real world is always larger than you think.

Anthropic’s Improvement Plans: The Right Direction, But Is It Enough?

At the end of the report, Anthropic outlined three improvement measures. Here’s my take on each:

Improvement One: Mandate internal employees to use the public release version.

The direction is entirely correct. However, the execution is much more challenging than it sounds. Internal employees need to test new features, making it impossible to use the public version 100% of the time. The key is to establish a systematic rotation mechanism between the “internal test version” and the “public version”—for instance, at least one week each month must be spent using the public version, with usage reports required.

Good intentions alone are not enough; there needs to be process assurance.

Improvement Two: Conduct ablation testing for every line modification in system prompts.

This is the most valuable technical lesson from this incident. Ablation testing involves deleting the prompt line by line and observing the impact on model output with each deletion. It sounds simple, but the actual workload is enormous—complex system prompts may have dozens or hundreds of lines, and each line requires a full evaluation run.

But this investment is worthwhile. This incident proved that for large models, every word in the system prompt can have a butterfly effect. A seemingly insignificant constraint might lead to severe performance degradation in certain scenarios.
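A line-by-line ablation loop can be sketched as follows. This is a minimal sketch under stated assumptions: `ablate_prompt` is a hypothetical helper, and `fake_evaluate` is a stand-in scorer invented for the demonstration (a real one would run the model over an evaluation suite, which is where the cost lies). The 3-point penalty simply mirrors the figure quoted in this article.

```python
from typing import Callable


def ablate_prompt(
    prompt_lines: list[str],
    evaluate: Callable[[str], float],
) -> list[tuple[str, float]]:
    """Return (line, score_delta) pairs, largest delta first.

    score_delta is the change in score when that line is removed, so a
    positive value means the line was hurting performance.
    """
    baseline = evaluate("\n".join(prompt_lines))
    deltas = []
    for i, line in enumerate(prompt_lines):
        variant = prompt_lines[:i] + prompt_lines[i + 1:]
        deltas.append((line, evaluate("\n".join(variant)) - baseline))
    return sorted(deltas, key=lambda pair: pair[1], reverse=True)


def fake_evaluate(prompt: str) -> float:
    # Stand-in for a full evaluation run: the length cap costs 3 points.
    return 100.0 - (3.0 if "100 words" in prompt else 0.0)


lines = [
    "You are a coding assistant.",
    "Keep final responses under 100 words.",
    "Prefer small, reviewable diffs.",
]
report = ablate_prompt(lines, fake_evaluate)
```

The loop needs one full evaluation per prompt line plus one for the baseline, which is why the workload grows quickly for prompts with dozens or hundreds of lines.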

Improvement Three: Introduce a “soak period” and gradual rollout for any changes that might sacrifice intelligence.

This is also the right direction. Everyone is familiar with staged (“gray” or canary) releases in traditional software: release to 1% of users first, observe the data, and gradually expand if everything is fine. Large model products need this mechanism even more, as evaluation sets can never cover the complexity of real usage scenarios.

But how long should the soak period be? How should the rollout percentage be determined? Anthropic did not clarify these details in the report, and I believe more specific plans are needed in the future.
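One common way to implement a percentage-based rollout is deterministic hashing of the user ID, sketched below. The function name, the feature label, and the bucket granularity are illustrative assumptions; the report does not describe Anthropic's actual mechanism.

```python
import hashlib


def in_rollout(user_id: str, feature: str, percent: float) -> bool:
    """Deterministically place a user in or out of a staged rollout.

    The same user always lands in the same bucket for a given feature,
    so raising `percent` only ever adds users and never swaps them.
    """
    digest = hashlib.sha256(f"{feature}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999
    return bucket < percent * 100


users = [f"user-{i}" for i in range(10_000)]
exposed_1 = {u for u in users if in_rollout(u, "shorter-replies", 1.0)}
exposed_10 = {u for u in users if in_rollout(u, "shorter-replies", 10.0)}
```

Because buckets are stable, the users exposed at 1% remain exposed at 10%, which keeps their feedback comparable across the whole observation window.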

Additionally, Anthropic has opened an official account @ClaudeDevs on X to communicate product decisions with the developer community. This is a positive step, but whether they can maintain this and to what extent remains to be seen.

What This Means for Us in AI Product Development—Five Practical Methodologies

As someone who personally experienced this storm, I believe the lessons from this incident go beyond just “Anthropic made mistakes.” There are many universal methodologies applicable to every team developing large model products.

I summarize five:

First: Never change default values secretly.

This is the most basic and easily overlooked product principle. Users choose your product based on their current perceived experience. If you secretly change the reasoning intensity from “high” to “medium,” it’s like a coffee shop secretly reducing the espresso shots in an Americano from two to one and a half—you might think the difference is negligible, but regular customers can taste it immediately.

If you must change default values, at least do two things: clearly state it in the update log and provide users with a one-click option to restore the old default.

Second: The “performance-cost-experience” triangle in large model products cannot be balanced using traditional software thinking.

Performance optimization in traditional software usually involves Pareto improvements—optimizing database query speed improves user experience and reduces server costs, leading to a win-win.

But large models are different. In large models, speed, cost, and intelligence often represent a zero-sum game. If you want the model to be faster, you have to sacrifice depth of thought; if you want to save tokens, you might lose contextual coherence; if you want the output to be more concise, you might compress critical reasoning processes.

Therefore, when making any optimizations involving these three dimensions, you must answer a soul-searching question: If this optimization only makes 10% of users happy but worsens the experience for 50%, would you still do it?

The answer is usually no—or at least make it an optional feature rather than changing the default.

Third: Evaluation sets are never enough; real user testing is irreplaceable.

Anthropic’s three optimizations all “seemed fine” on internal evaluation sets. But in the real environment, they all encountered problems.

The lesson here is: do not blindly trust evaluation sets. No matter how comprehensive they are, they only represent a subset of real usage scenarios, and a carefully curated subset at that. Real users will do far more diverse, chaotic, and unpredictable things than you can imagine.

My suggestion is: for any changes that might impact the core capabilities of the model, in addition to running evaluation sets, conduct “real-world stress testing”: find 10 to 20 heavy users and have them use the modified version in real work for at least a week, collecting qualitative feedback. This is more effective than running a thousand evaluation cases.

Fourth: Cache and context management are the “lifeblood” of large model products; changes require the highest level of code review.

The cache-clearing bug in Claude Code was fundamentally a “seemingly simple but extremely complex” context management issue. Such problems are common in all large model products.

I have seen too many large model products stumble in context management: dialogue history being inexplicably truncated, long documents forgetting the first half halfway through processing, contradictions in multi-turn dialogues…

If you are developing large model products, I suggest marking all code modules related to “context,” “memory,” and “cache” as “core red zones”—any changes require at least two senior engineers to cross-review, and they must be tested in various edge scenarios (like resuming after being idle for 1 hour, 5 hours, or 24 hours).

You might also want to look into open-source frameworks like LangGraph and MemGPT that specialize in large model memory management; they have developed several mature solutions for context persistence and layered memory worth referencing.

Fifth: When problems arise, communicate honestly with users immediately; don’t wait for the “best timing.”

Anthropic’s biggest PR mistake this time was not the bug itself, but the decision to publicly acknowledge the issue only after 45 days of community feedback. Moreover, they chose to release the report on the same day as a competitor’s new product launch, further undermining their sincerity.

In the AI industry, user trust is extremely fragile. These users are not ordinary consumers; they are developers who have deeply integrated your model into their workflows, and their productivity and income directly depend on your product’s stability.

When you know there’s a problem with the product, the best time to communicate is always “now”—even if you haven’t fully figured out the cause. You can say, “We have noticed a problem, are investigating, and our preliminary findings are this and that, with an expected update time.” This is a hundred times better than remaining silent for 45 days and then suddenly dropping a “perfect report.”

In Conclusion: Technological Leadership Is Just the Entry Ticket

One question I keep pondering is: if Claude were not “one of the world’s strongest programming models,” would this incident have caused such a significant uproar?

The answer is likely no.

It is precisely because Claude Code represents the pinnacle of programming assistance tools that user expectations have been raised to the highest level. When this pinnacle suddenly crumbled, it fell squarely on the most loyal, highest-paying, and deeply reliant core users—their reactions were naturally the most intense.

This incident reveals a harsh reality that many AI practitioners may not yet realize: As competition in large models heats up, the lead time for technological capabilities is getting shorter. Today you are the strongest, but three months from now, others might catch up.

The real moat is not being number one in benchmarks, but whether you can maintain user trust when problems arise with your product.

OpenAI has had similar lessons (remember the “laziness” incident with GPT-4), and Google’s Gemini has also stumbled. No company in the industry can guarantee that their models will remain stable forever.

What users can accept is, “Tell me what went wrong, how you fixed it, and how you will avoid it in the future.” What users cannot accept is, “You secretly changed things, denied there were problems, and only acknowledged it when I was about to give up on you.”

For those of us developing AI products, the biggest lesson from this incident can be summed up in one sentence:

You can make technical mistakes, but you cannot make communication mistakes. Bugs can be fixed, but trust cannot.
